Hi all! I am glad to be participating in this forum for the first time and, as it could hardly be otherwise, I have a very noob question. I hope someone can help me understand the problem and arrive at a better solution.

I have scanned through the related topics and I know there have been many approaches and discussions regarding the scaling of non-overlapping corpora. From reading most of them I have come to the conclusion that there is probably neither a straightforward solution nor a universal one for every project. Unfortunately, I understand very little maths and only the basics of AI/machine learning, so I cannot work out a solution by myself. Here are some of the characteristics of my project, in case someone can think of a possible approach:

I am writing a piece for doublebass-flute and live electronics. I intend to do some live mosaicking with concatenative synthesis. The corpus I am using is pretty varied, with all the sounds coming from playing around with, hitting, bowing and scratching a cheap aluminium pizza plate (captured with a contact mic and a condenser mic).

To ensure that most of the corpus remains available for playing even when the input has no direct match in it, I intend to scale it.

In order to do this I was thinking of:

- putting the analyzed corpus (descriptors and MFCCs) into fluid.robustscale~ (as I read this would be better for non-uniformly distributed data)

- doing the same with an analyzed target audio from the flute

- training a regression engine to map the raw flute descriptors to the scaled flute descriptors

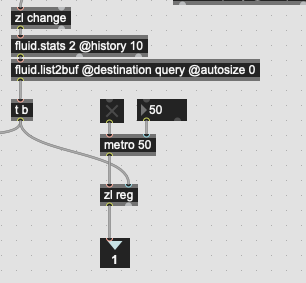

- feeding the analyzed descriptors of the live input to the regression engine and finally using its output to find a match with fluid.kdtree~
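In case it helps to see the idea outside of Max, here is a rough Python sketch of the steps above as I understand them. Everything in it is an assumption made for illustration: the data is random, `robust_scale` is my own median and interquartile-range scaling rather than the actual fluid.robustscale~ code, a plain least-squares linear fit stands in for the regression engine, and a brute-force distance search stands in for fluid.kdtree~.

```python
import numpy as np


def robust_scale(data, low=25.0, high=75.0):
    """Centre each column on its median and divide by its inter-percentile range."""
    median = np.median(data, axis=0)
    lo, hi = np.percentile(data, [low, high], axis=0)
    spread = np.where(hi - lo == 0, 1.0, hi - lo)  # guard against division by zero
    return (data - median) / spread


def fit_linear(x, y):
    """Least-squares fit of y from x, with a bias column appended to x."""
    x1 = np.hstack([x, np.ones((len(x), 1))])
    weights, *_ = np.linalg.lstsq(x1, y, rcond=None)
    return weights


def predict_linear(weights, x):
    """Apply the fitted linear map to one frame or a batch of frames."""
    x = np.atleast_2d(x)
    x1 = np.hstack([x, np.ones((len(x), 1))])
    return x1 @ weights


def nearest_index(points, query):
    """Brute-force nearest neighbour (hypothetical stand-in for a k-d tree lookup)."""
    distances = np.linalg.norm(points - query, axis=1)
    return int(np.argmin(distances))


# Made-up descriptor frames: the two sources deliberately occupy different ranges.
rng = np.random.default_rng(0)
corpus_raw = rng.normal(loc=5.0, scale=3.0, size=(200, 4))   # pizza plate corpus
flute_raw = rng.normal(loc=-2.0, scale=0.5, size=(150, 4))   # flute target

# Steps 1 and 2: scale each set with its own statistics.
corpus_scaled = robust_scale(corpus_raw)
flute_scaled = robust_scale(flute_raw)

# Step 3: learn the mapping raw flute -> scaled flute.
weights = fit_linear(flute_raw, flute_scaled)

# Step 4: map a "live" frame into the scaled space and look up the closest corpus frame.
live_frame = flute_raw[10]
live_scaled = predict_linear(weights, live_frame)[0]
match = nearest_index(corpus_scaled, live_scaled)
print("closest corpus frame:", match)
```

In this sketch the two sets end up centred on zero with a comparable spread, which is what I am hoping will make the whole corpus reachable from the flute.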

There is probably more than one conceptual mistake here, so I apologize for the very noob question. I also apologize for duplicating topics. I felt my question was much simpler and more basic than the exchanges that took place in the other threads, so I thought of beginning a new one so as not to clutter them.

I hope someone can give me a hand here!

Thank you very much, and greetings!