Putting this in the secret forum because it might have to do with fluid.dataset~ instead of (or as well as) fluid.bufcompose~.

I was working on a patch for this thread and got an instacrash when doing some fluid.bufcompose~-ing.

Attached is the crash report, but here’s the zesty bit (I think).

Thread 23 Crashed:

0 org.flucoma.${PRODUCT_NAME:rfc1034identifier} 0x0000000124a47478 fluid::client::Result fluid::client::BufComposeClient::process(fluid::client::FluidContext&) + 1000

1 org.flucoma.${PRODUCT_NAME:rfc1034identifier} 0x0000000124a46b70 fluid::client::NRTThreadingAdaptor<fluid::client::ClientWrapper<fluid::client::BufComposeClient> >::ThreadedTask::process(std::__1::promise<fluid::client::Result>) + 64

2 org.flucoma.${PRODUCT_NAME:rfc1034identifier} 0x0000000124a4699d fluid::client::NRTThreadingAdaptor<fluid::client::ClientWrapper<fluid::client::BufComposeClient> >::ThreadedTask::ThreadedTask(std::__1::shared_ptr<fluid::client::ClientWrapper<fluid::client::BufComposeClient> >, fluid::client::ParameterSet<fluid::client::ParameterDescriptorSet<std::__1::integer_sequence<unsigned long, 0ul, 0ul, 0ul, 0ul, 0ul, 0ul, 0ul, 0ul, 0ul, 0ul>, std::__1::tuple<std::__1::tuple<fluid::client::InputBufferT, std::__1::tuple<>, fluid::client::Fixed >, std::__1::tuple<fluid::client::LongT, std::__1::tuple<fluid::client::impl::MinImpl >, fluid::client::Fixed >, std::__1::tuple<fluid::client::LongT, std::__1::tuple<>, fluid::client::Fixed >, std::__1::tuple<fluid::client::LongT, std::__1::tuple<fluid::client::impl::MinImpl >, fluid::client::Fixed >, std::__1::tuple<fluid::client::LongT, std::__1::tuple<>, fluid::client::Fixed >, std::__1::tuple<fluid::client::FloatT, std::__1::tuple<>, fluid::client::Fixed >, std::__1::tuple<fluid::client::BufferT, std::__1::tuple<>, fluid::client::Fixed >, std::__1::tuple<fluid::client::LongT, std::__1::tuple<>, fluid::client::Fixed >, std::__1::tuple<fluid::client::LongT, std::__1::tuple<>, fluid::client::Fixed >, std::__1::tuple<fluid::client::FloatT, std::__1::tuple<>, fluid::client::Fixed > > > const>&, bool) + 365

3 org.flucoma.${PRODUCT_NAME:rfc1034identifier} 0x0000000124a4d297 fluid::client::NRTThreadingAdaptor<fluid::client::ClientWrapper<fluid::client::BufComposeClient> >::process() + 231

4 org.flucoma.${PRODUCT_NAME:rfc1034identifier} 0x0000000124a4d04f fluid::client::impl::NonRealTime<fluid::client::FluidMaxWrapper<fluid::client::NRTThreadingAdaptor<fluid::client::ClientWrapper<fluid::client::BufComposeClient> > > >::process() + 95

5 org.flucoma.${PRODUCT_NAME:rfc1034identifier} 0x0000000124a4c1f6 fluid::client::impl::NonRealTime<fluid::client::FluidMaxWrapper<fluid::client::NRTThreadingAdaptor<fluid::client::ClientWrapper<fluid::client::BufComposeClient> > > >::deferProcess(fluid::client::FluidMaxWrapper<fluid::client::NRTThreadingAdaptor<fluid::client::ClientWrapper<fluid::client::BufComposeClient> > >*) + 486

I’m also getting a bunch of errors that I don’t think should be appearing:

fluid.bufcompose~: Source Buffer Not Found or Invalid

fluid.bufcompose~: Zero length segment requested

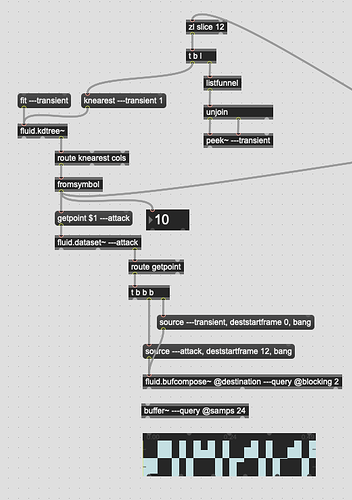

Actually, looking at the relevant part of the patch, I wonder if there’s something going on with how fluid.dataset~ “writes” to a buffer when it gets a getpoint message.

From the looks of this section of the patch, I should never get a “Buffer Not Found” error (or a crash).

I don’t know if it works this way at all, but how does fluid.dataset~ handle buffer writing? Does it happen in one of the @blocking modes? Is it possible that the fluid.bufcompose~ with @blocking 2 further downstream is accessing the buffer at the same time as fluid.dataset~ (if the write is happening in a lower-priority thread or whatever)?

(If that’s the case, I’ll make a separate feature request for threading options for fluid.dataset~, as getting data out in a timely manner is pretty important.)
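
To make the suspicion about simultaneous access concrete, here is a minimal Python sketch of the pattern I'd expect to be safe. It is not FluCoMa's code: `SharedBuffer`, `write`, `read_segment` and `run_demo` are hypothetical names, and the two threads are only stand-ins for fluid.dataset~ writing on getpoint and fluid.bufcompose~ reading on a worker thread. The writer fills the buffer under a lock and signals when it is done, and the reader waits for that signal before copying a segment.

```python
import threading


class SharedBuffer:
    """Hypothetical stand-in for a buffer~; not FluCoMa's implementation."""

    def __init__(self):
        self._lock = threading.Lock()
        self._frames = []

    def write(self, values):
        # Replace the contents under the lock so a reader never sees
        # a half-written buffer.
        with self._lock:
            self._frames = list(values)

    def read_segment(self, start, length):
        # Copy a segment under the same lock; complain if the request
        # does not fit the current contents (e.g. the buffer is still empty).
        with self._lock:
            if length <= 0 or start + length > len(self._frames):
                raise ValueError("zero length or out-of-range segment requested")
            return list(self._frames[start:start + length])


def run_demo():
    buf = SharedBuffer()
    written = threading.Event()
    result = []

    def writer():
        # Stand-in for fluid.dataset~ answering a getpoint message.
        buf.write([0.1, 0.2, 0.3, 0.4])
        written.set()

    def reader():
        # Stand-in for fluid.bufcompose~ running on a worker thread.
        written.wait()  # only read once the writer has signalled completion
        result.extend(buf.read_segment(1, 2))

    # Start the reader first on purpose: it still has to wait for the writer.
    threads = [threading.Thread(target=reader), threading.Thread(target=writer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return result
```

If the reader didn't wait for the signal, it could run before the writer and find the buffer still empty, which in this sketch raises the same kind of "zero length" complaint as the errors above. Whether anything like that is happening inside the real objects is exactly what I'm asking.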

Full crash report:

crash.zip (42.1 KB)