
In my experience @rodrigo.constanzo is right. @rodrigo.constanzo also has some great videos and posts, many of which you can find on this Discourse, that model the strategy of “knowing your data”: doing a lot of plotting, listening, and tweaking to really understand what the audio analyses provide, what you care about as a listener/composer, and how to connect those two realities.

MFCCs tend to be a good general-purpose starting point. Also, it’s often not too much effort to do more analyses (spectral shape, pitch, loudness, etc.), then plot, listen, etc. and see what makes sense for you.
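To make the "analyse, then compare" loop concrete, here is a rough NumPy-only sketch that computes mean MFCCs alongside a spectral centroid and an RMS level for two test signals. It is not FluCoMa code: the function names (`frames`, `mel_filterbank`, `describe`), the frame sizes, and the band counts are all my own assumptions for illustration, and the RMS level is only a crude stand-in for a proper loudness measure.

```python
import numpy as np

def frames(signal, size=1024, hop=512):
    """Slice a mono signal into overlapping, Hann-windowed frames."""
    n = 1 + (len(signal) - size) // hop
    idx = np.arange(size)[None, :] + hop * np.arange(n)[:, None]
    return signal[idx] * np.hanning(size)

def mel_filterbank(n_bands, n_fft, sr):
    """Triangular filters spaced evenly on the mel scale (hypothetical helper)."""
    hz_to_mel = lambda f: 2595.0 * np.log10(1.0 + f / 700.0)
    mel_to_hz = lambda m: 700.0 * (10.0 ** (m / 2595.0) - 1.0)
    edges = mel_to_hz(np.linspace(0.0, hz_to_mel(sr / 2), n_bands + 2))
    freqs = np.fft.rfftfreq(n_fft, 1.0 / sr)
    bank = np.zeros((n_bands, len(freqs)))
    for i in range(n_bands):
        lo, mid, hi = edges[i:i + 3]
        rising = (freqs - lo) / (mid - lo)
        falling = (hi - freqs) / (hi - mid)
        bank[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank

def describe(signal, sr, n_bands=40, n_mfcc=13):
    """Return per-slice summary descriptors, averaged over frames."""
    fr = frames(signal)
    n_fft = fr.shape[1]
    power = np.abs(np.fft.rfft(fr, axis=1)) ** 2
    freqs = np.fft.rfftfreq(n_fft, 1.0 / sr)

    # MFCCs: log mel-band energies followed by a DCT-II (unnormalised).
    log_mel = np.log(power @ mel_filterbank(n_bands, n_fft, sr).T + 1e-10)
    k = np.arange(n_mfcc)[:, None]
    n = np.arange(n_bands)[None, :]
    dct = np.cos(np.pi * k * (n + 0.5) / n_bands)
    mfcc = log_mel @ dct.T

    # Spectral centroid (one of the "spectral shape" descriptors), in Hz.
    centroid = (power @ freqs) / (power.sum(axis=1) + 1e-10)
    # RMS level of the windowed frames in dB: a crude loudness stand-in.
    level_db = 10.0 * np.log10(np.mean(fr ** 2, axis=1) + 1e-10)

    return {
        "mfcc": mfcc.mean(axis=0),
        "centroid_hz": float(centroid.mean()),
        "level_db": float(level_db.mean()),
    }

# Two deliberately different test sounds: a 220 Hz sine and white noise.
sr = 44100
t = np.arange(sr) / sr
sine = 0.5 * np.sin(2 * np.pi * 220.0 * t)
noise = 0.5 * np.random.default_rng(0).uniform(-1.0, 1.0, sr)

for name, sig in [("sine", sine), ("noise", noise)]:
    d = describe(sig, sr)
    print(name, round(d["centroid_hz"]), round(d["level_db"], 1),
          np.round(d["mfcc"][:4], 1))
```

Swapping in your own slices and then plotting or auditioning them sorted by each descriptor is the part that actually tells you which analysis matches what you hear.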

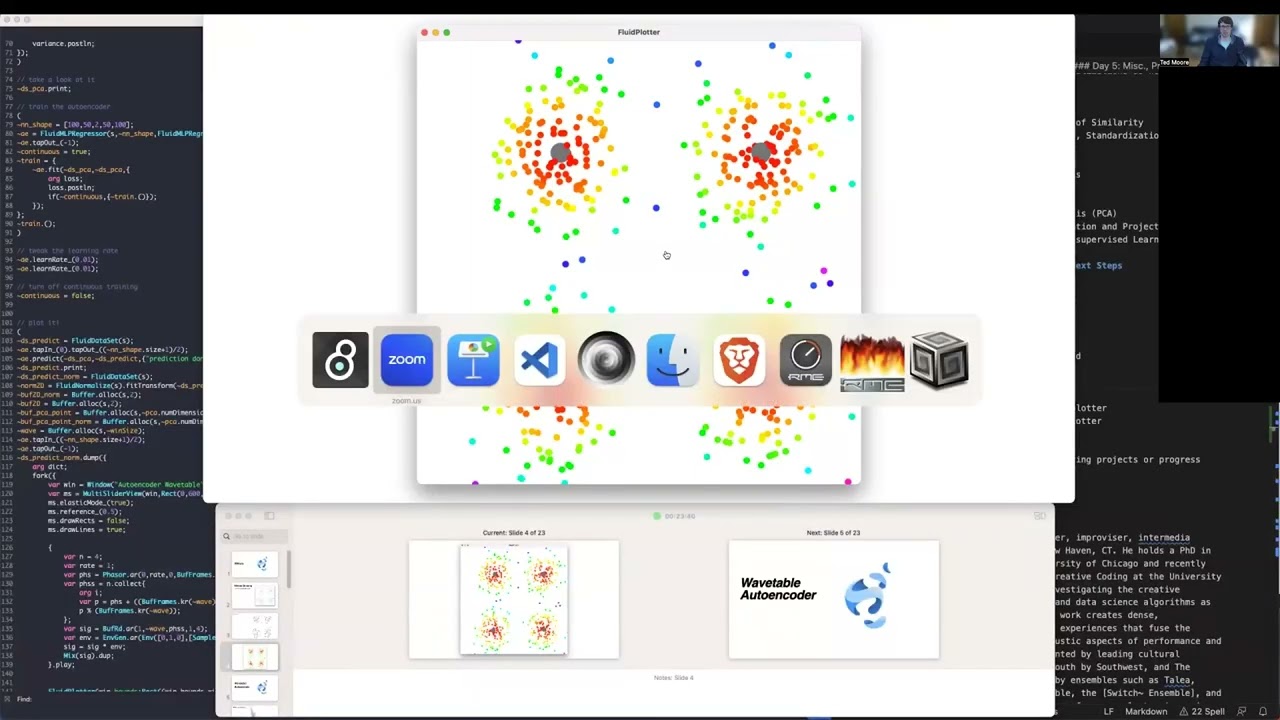

> I would like to make the corpus as small and potent as possible. For instance, loading new audio as I collect it and incorporating slices from it where there are gaps in the corpus, and thinning out denser clusters.

Something like this might get you going in that direction: