Dear all

Here is a list of ideas for playing with the LPT example provided and discussed in yesterday’s presentation. The presentation was not recorded, and some of these ideas may not have come across clearly, so treat this as a brainstorm. Feel free to post your additions, implementations and results in this thread.

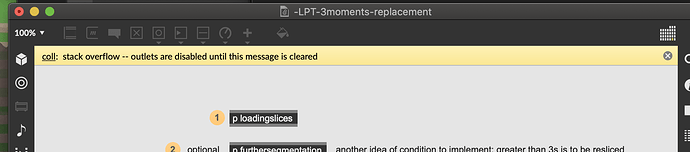

Caveat: I should have made an abstraction of the analysis process, since it is exactly the same everywhere it happens. I mention this because if you change parameters, you will need to change them in each of those places. To start exploring the nearest neighbours you get, though, there are only 2 places to change (the analysis and the top layer of nearest).

Low-hanging fruits (results within seconds)

Time-based and somehow interrelated:

-

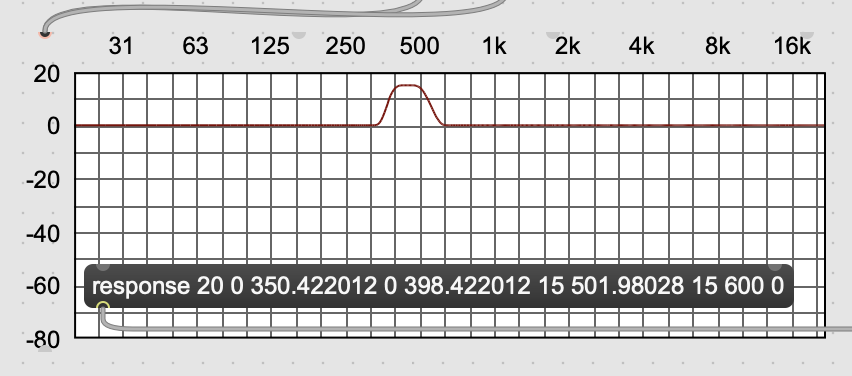

changing the fft settings at the moment all descriptors run at 2048 window size and 512 hopsize. changing those could be fun, but they need to be changed also in the stats bundling (which is in hops)

-

stats bundling in the single message above bufstats you have 3 bangs preceded by 3 time windows. They are explained in the comment on its right.

-

how long is analysed at the moment there is a minimum of 200ms duration and an absolute duration of 500ms (zero paded or truncated depending on the source lenght). These are very personal biases you can change easily.
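To make the link between these three settings concrete, here is a minimal Python sketch (not the patch itself) of how a duration in milliseconds translates into a number of hops, and of the zero-pad-or-truncate step. The 44100 Hz sample rate, the rounding choice and both function names are my assumptions for illustration; the patch may count frames slightly differently depending on padding.

```python
def ms_to_hops(duration_ms, hop_size=512, sample_rate=44100):
    """Approximate number of analysis hops covering a duration.

    sample_rate=44100 is an assumption; use your buffer's actual rate.
    """
    samples = duration_ms * sample_rate / 1000
    return round(samples / hop_size)


def fit_to_length(samples, target_len):
    """Truncate or zero-pad a list of samples to exactly target_len."""
    padding = [0.0] * max(0, target_len - len(samples))
    return samples[:target_len] + padding


if __name__ == "__main__":
    # With the current settings, 500ms is roughly 43 hops of 512 samples.
    print(ms_to_hops(500))
    # Halving the hop size roughly doubles the hop count, which is why
    # the time windows in the stats bundling need changing too.
    print(ms_to_hops(500, hop_size=256))
```

If you change the hop size, the same time windows expressed in hops no longer cover the same stretch of audio, so the numbers in the bundling message have to follow.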

Value-based and again somehow interrelated:

- the threshold of validity: in the javascript code, one could quickly change

  - the threshold of silence,

  - the threshold of pitch confidence,

  - the threshold of centroid confidence.

- the values of invalid entries: again in the javascript, one could change the value assigned to each entry when it is declared invalid.
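As a rough illustration of the kind of logic involved, here is a Python sketch of a per-frame sanitisation step. It is not the javascript from the patch: every threshold value, every substitute value and the rule that silence invalidates both pitch and centroid are assumptions I made up for the example.

```python
# All numbers below are hypothetical placeholders, not the patch's values.
SILENCE_DB = -60.0        # below this loudness, the frame counts as silent
MIN_PITCH_CONF = 0.8      # below this, the pitch estimate is not trusted
MIN_CENTROID_CONF = 0.5   # below this, the centroid is not trusted
INVALID_PITCH = 0.0       # value written when pitch is declared invalid
INVALID_CENTROID = 0.0    # value written when centroid is declared invalid


def sanitise(loudness_db, pitch, pitch_conf, centroid, centroid_conf):
    """Return (loudness, pitch, centroid) with invalid entries replaced."""
    silent = loudness_db < SILENCE_DB
    if silent or pitch_conf < MIN_PITCH_CONF:
        pitch = INVALID_PITCH
    if silent or centroid_conf < MIN_CENTROID_CONF:
        centroid = INVALID_CENTROID
    return loudness_db, pitch, centroid


if __name__ == "__main__":
    # A confident, audible frame passes through untouched.
    print(sanitise(-20.0, 440.0, 0.95, 1500.0, 0.9))
    # A low pitch confidence only replaces the pitch entry.
    print(sanitise(-20.0, 440.0, 0.3, 1500.0, 0.9))
```

Changing the substitute values matters as much as the thresholds, since those values end up in the stats and therefore in the distances.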

Mid-hanging fruits (a few hours of fiddling)

- One could change which stats are being bundled. At the moment they are the mean and std of the values, and the mean and std of their first derivative (how they change in time), for each of the 3 sanitised descriptors, for each time slot. That makes 4 stats x 3 descriptors x 3 time slots = 36 values per point/entry/slice. The first thing to try would be replacing the stats with mean, std, 25th and 75th percentiles, for instance, or mean, 25th, 50th and 75th percentiles. That way you keep the same number of values per point, dropping the derivative but taking into account 2 statistical measurements. The ‘derivative’ could be considered almost covered by the 3-time bundling…
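Here is a small Python sketch of that arithmetic, assuming each descriptor is a plain list of per-frame values and each time slot is a (start, end) range in hops. The function names are hypothetical, the std here is the population std, and the derivative is a simple first difference, so the exact numbers will not match bufstats; the point is only that both stat sets give 36 values.

```python
import statistics


def current_stats(values):
    """Mean and std of the values, then mean and std of their first difference."""
    deriv = [b - a for a, b in zip(values, values[1:])]
    return [
        statistics.mean(values),
        statistics.pstdev(values),
        statistics.mean(deriv),
        statistics.pstdev(deriv),
    ]


def quartile_stats(values):
    """Alternative set: mean plus the 25th, 50th and 75th percentiles."""
    q25, q50, q75 = statistics.quantiles(values, n=4, method="inclusive")
    return [statistics.mean(values), q25, q50, q75]


def bundle(descriptors, slots, stats_fn):
    """Concatenate the stats of every descriptor for every time slot."""
    point = []
    for start, end in slots:
        for values in descriptors:
            point.extend(stats_fn(values[start:end]))
    return point


if __name__ == "__main__":
    # 3 made-up descriptors of 12 frames each, split in 3 slots of 4 hops.
    frames = [[float(i * k) for i in range(12)] for k in (1, 2, 3)]
    slots = [(0, 4), (4, 8), (8, 12)]
    print(len(bundle(frames, slots, current_stats)))   # 4 x 3 x 3
    print(len(bundle(frames, slots, quartile_stats)))  # same size
```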

What is the next fruit ripeness metaphor? Blooming flowers? (a few days of fiddling)

- one could explore how subsets could be made via datasetquery conditions, before finding the nearest neighbours.

- one could weight the various dimensions by multiplying the distances (inverse logic here: small multipliers make distances smaller, so that descriptor is more likely to trigger nearest neighbours)

- one could remove outliers altogether in the sanitisation code (but beware of empty slots and stats in the time bundling… maybe a buffer per time slot would be healthier then)
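The first two ideas can be sketched together in plain Python. This is a brute-force stand-in, not the datasetquery or nearest-neighbour objects from the patch: the dataset is assumed to be a dict of label to list of values, the condition is any function returning true or false, and both function names are hypothetical.

```python
import math


def weighted_distance(a, b, weights):
    """Euclidean distance where each dimension's difference is scaled by a weight."""
    return math.sqrt(sum((w * (x - y)) ** 2 for x, y, w in zip(a, b, weights)))


def nearest(target, dataset, weights, condition=None):
    """Label of the nearest entry among those passing the condition, or None."""
    candidates = {
        label: point
        for label, point in dataset.items()
        if condition is None or condition(point)
    }
    if not candidates:
        return None
    return min(
        candidates,
        key=lambda label: weighted_distance(target, candidates[label], weights),
    )


if __name__ == "__main__":
    data = {"a": [0.0, 10.0], "b": [5.0, 0.0]}
    print(nearest([0.0, 0.0], data, [1.0, 1.0]))   # equal weights
    # A small multiplier on dimension 1 shrinks its share of the distance.
    print(nearest([0.0, 0.0], data, [1.0, 0.1]))
    # Keep only entries whose dimension 0 is above 1 before searching.
    print(nearest([0.0, 0.0], data, [1.0, 0.1], lambda p: p[0] > 1.0))
```

With equal weights the target lands on "b"; shrinking the multiplier of dimension 1 flips it to "a", because the large difference in that dimension now counts for much less.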

This one smells more of pollen than of flowers or fruit…

- one could use LPT to generate the analysis, and then use the discrete 1D patch to arbitrarily curate proximity along a single dimension…

Let me know if I forgot anything we talked about!

p