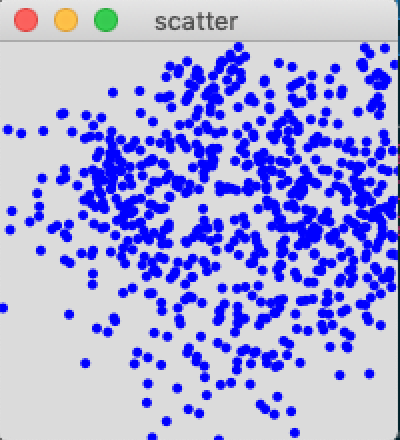

As part of my attempt to move some of what I’ve been doing into ML land (and after a fruitful geek-out with @jamesbradbury), I wanted to try using dimensionality reduction in a way that still “made sense”.

That is, my understanding so far is that once you go into dimensionality reduction land, you give up the ability to have numbers that relate to real-world units or have “meaning”.

I remember @tremblap mentioning that he wanted a better “timbral” descriptor for his LPT idea: a way to reduce 12 MFCCs down to a single number that represents the overall timbre better than centroid+stats. MFCCs are intrinsically weird numbers, though, which I’m sure complicates this a lot.

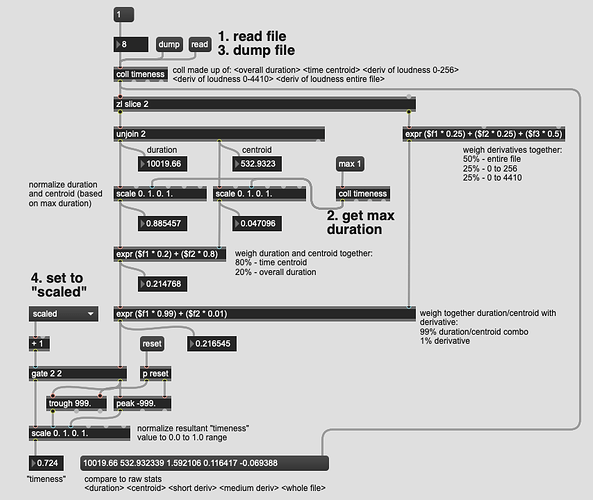

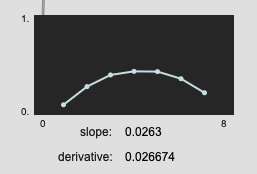

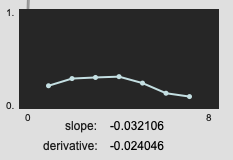

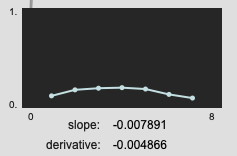

So I thought about trying to reduce a bunch of my metadata-esque “timeness” units: mainly things like duration and time centroid, and potentially the derivative of loudness (perhaps at several time scales). I had initially posted some thoughts about this here, but that was before we had the dimensionality reduction tools.

My hypothesis (and goal) is to get a single value that corresponds to how “long” a file sounds, by weighting the actual duration along with the time centroid and the derivative of loudness. That’s the hope, at least.
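If the aim is a single “how long it sounds” number that stays readable, one hand-rolled alternative to MDS is a weighted sum of standardized features. This is a minimal plain-Python sketch, not anything the fluid.* objects provide: the weights are hypothetical placeholders, and treating a flatter (less negative) loudness slope as “longer” is an assumption.

```python
from statistics import mean, pstdev

def zscores(values):
    """Standardize one feature column (constant columns map to 0.0)."""
    mu, sd = mean(values), pstdev(values)
    if sd == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sd for v in values]

def perceived_length(duration_ms, centroid_ms, loudness_slope, weights=(0.6, 0.3, 0.1)):
    """Hypothetical 'sounds long' score: weighted sum of per-feature z-scores.

    Weights are illustrative placeholders, not tuned values. A higher
    (flatter, less negative) loudness slope is assumed to sound longer.
    """
    wd, wc, ws = weights
    cols = zip(zscores(duration_ms), zscores(centroid_ms), zscores(loudness_slope))
    return [wd * d + wc * c + ws * s for d, c, s in cols]

# Three hypothetical files: short/decaying, medium, long/sustained
print(perceived_length([100.0, 2000.0, 4000.0], [50.0, 900.0, 1500.0], [-1.5, -0.2, 0.0]))
```

The result is in standard-deviation units centered on zero, so it still isn’t milliseconds, but each weight states directly how much each descriptor counts toward “long”.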

/////////////////////////////////////////////////////////////////////////////////////////////////////////////

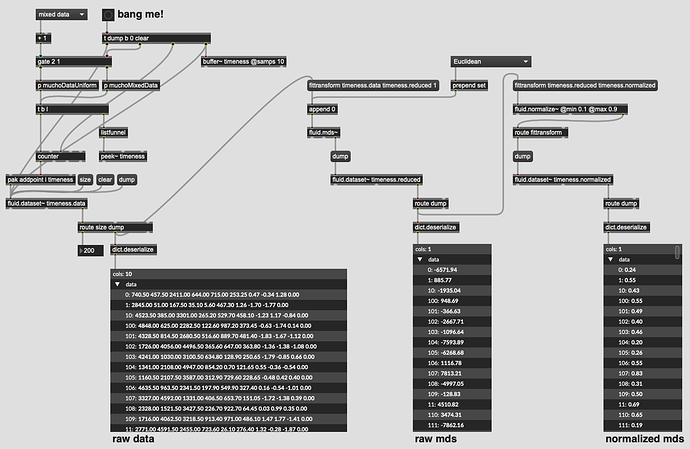

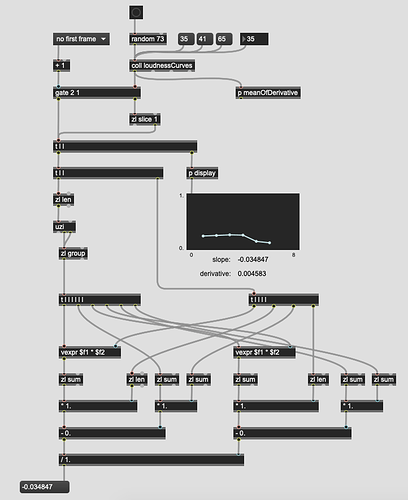

So I’ve whipped up a test patch, partly for me to make sense of this workflow, but also to see what kind of results I would get.

It creates a fluid.dataset~ filled with values in milliseconds (0 to 5000 ms), as well as one that combines milliseconds with some derivative-like values (-2.0 to 2.0).

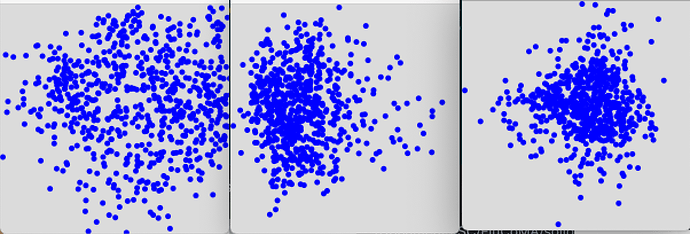

I purposefully didn’t standardize/normalize the data before fluid.mds~-ing it, since I wanted to keep some differentiation in scale between the different numbers (perhaps a big mistake). I kind of hoped (and falsely intuited) that the numbers coming out would be roughly in the same range/domain as what went in.
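Leaving the columns unscaled does keep them different, but probably not in the intended way: MDS works from distances between points, and (assuming a Euclidean metric) a 0 to 5000 ms column can swamp a -2.0 to 2.0 column entirely. This plain-Python sketch uses two made-up rows with a hypothetical column layout to show how much of the squared distance each column contributes, before and after min-max scaling by the ranges above.

```python
def column_shares(a, b):
    """Fraction of the squared Euclidean distance between rows a and b from each column."""
    sq = [(x - y) ** 2 for x, y in zip(a, b)]
    total = sum(sq)
    return [s / total for s in sq]

# Hypothetical layout: [duration_ms, time_centroid_ms, loudness_derivative]
a = [1000.0, 400.0, -2.0]
b = [1200.0, 500.0, 2.0]  # derivative at the opposite end of its range

raw = column_shares(a, b)
print(raw)  # the derivative column's share is tiny

# Rescale each column by its assumed range: 0-5000 ms and -2.0 to 2.0
lows = [0.0, 0.0, -2.0]
highs = [5000.0, 5000.0, 2.0]

def minmax(row):
    return [(v - lo) / (hi - lo) for v, lo, hi in zip(row, lows, highs)]

scaled = column_shares(minmax(a), minmax(b))
print(scaled)  # now the derivative column dominates instead
```

Neither extreme is automatically right; if some columns should matter more than others, explicit weights applied after scaling are easier to reason about than whatever influence the raw units happen to give.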

That is most definitely not the case…

Normalizing the data afterwards obviously doesn’t help either.
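To see why the output lands in a different range even in the friendliest case, here is classical (Torgerson) MDS to one dimension on one-dimensional data, in plain Python with power iteration. This textbook variant is swapped in for illustration; fluid.mds~ may use a different MDS algorithm and distance metric. MDS only tries to preserve distances between points, so its output is centered on zero and its sign (and, in more dimensions, its rotation) is arbitrary.

```python
import math

def classical_mds_1d(points, iters=500):
    """Embed points in one dimension with classical (Torgerson) MDS.

    Textbook sketch for illustration only; fluid.mds~ may use a different
    MDS variant and distance metric internally.
    """
    n = len(points)
    # Squared Euclidean distances between every pair of points
    d2 = [[sum((a - b) ** 2 for a, b in zip(p, q)) for q in points] for p in points]
    # Double centering: B = -1/2 * J * D2 * J with J = I - (1/n) * ones
    means = [sum(row) / n for row in d2]  # row means == column means (D2 is symmetric)
    grand = sum(means) / n
    b = [[-0.5 * (d2[i][j] - means[i] - means[j] + grand) for j in range(n)]
         for i in range(n)]
    # Power iteration for the largest eigenpair of B
    v = [float(i + 1) for i in range(n)]  # arbitrary non-constant starting vector
    lam = 0.0
    for _ in range(iters):
        w = [sum(b[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            return [0.0] * n  # all points identical: nothing to embed
        v = [x / norm for x in w]
        lam = norm
    # Coordinates = sqrt(eigenvalue) * unit eigenvector
    return [math.sqrt(lam) * x for x in v]

# Three files with durations of 0, 1000 and 5000 ms
coords = classical_mds_1d([[0.0], [1000.0], [5000.0]])
print(coords)  # centered on zero, not on the original ms values

lo, hi = min(coords), max(coords)
print([(c - lo) / (hi - lo) for c in coords])  # 0 to 1, no longer in ms either
```

With inputs of 0, 1000 and 5000 ms, the coordinates should come out close to -2000, -1000 and 3000 (or their negation): the spacing survives, but the mean has been subtracted, and min-max normalizing afterwards just maps them onto 0 to 1. With two or more input columns on different scales, the 1-D output is a blend of them and no longer has a single unit at all.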

I tried fluid.standardize~-ing the data pre-fluid.mds~, but I’m getting nans and (end) as the values everywhere, and I don’t know why (separate concern/issue).
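One plausible cause worth ruling out (not confirmed to be what is happening here): in IEEE floating point, as used by C/C++ code, 0/0 gives nan, so any column whose values are all identical (for example, an unused column left at 0) has a standard deviation of zero and turns into nan when standardized. Plain Python raises ZeroDivisionError instead, so this sketch checks for constant or non-finite columns explicitly and maps constant columns to 0.0. The helper names are hypothetical.

```python
import math
from statistics import mean, pstdev

def find_suspect_columns(rows):
    """Return indices of columns that are constant or already contain nan/inf."""
    suspects = []
    for j in range(len(rows[0])):
        col = [r[j] for r in rows]
        if any(not math.isfinite(v) for v in col):
            suspects.append(j)  # bad values already present before standardizing
        elif pstdev(col) == 0:
            suspects.append(j)  # zero variance: z-scoring would divide by zero
    return suspects

def standardize(rows):
    """Z-score each column; constant columns become 0.0 instead of 0/0 = nan."""
    stats = [(mean(c), pstdev(c)) for c in zip(*rows)]
    return [[(v - mu) / sd if sd > 0 else 0.0 for v, (mu, sd) in zip(r, stats)]
            for r in rows]

rows = [[100.0, 0.0, -1.0], [2000.0, 0.0, 0.5], [4000.0, 0.0, 1.5]]
print(find_suspect_columns(rows))  # the all-zero middle column is flagged
print(standardize(rows))
```

Running a check like this on a dump of the dataset before standardizing would at least narrow down whether the nans come from the data or from somewhere else in the patch.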

So I wanted to post the code, but also ask about this general approach: using dimensionality reduction to create macro/meta-descriptors that fuse together related data, which is then queried/matched in a contextual way. (As opposed to shoving all the random numbers into a black box and hoping what you want comes out, which I guess I’m not opposed to either.)

----------begin_max5_patcher----------

3536.3oc6c88jihaD94Y9qf3mRpLGAIgDPd5RkKUkpR1mRkm15povFrG1ECN

7icm8t51+1iPBvBavVXK4AOGmqyiWIvp6O0cqVBoO+qO9vhkouFluv3uZ7Qi

Gd3We7gGXEUUvC0+6GVr0+0Uw94rKawpzsaCSJV7DuthvWKXkmjls0ON5WBC

L1Fj2T8trvb5U6WDkl7bbTR3pzxD1M.quh0oIEI9aCYeI+yv3uDVDsxu49iB

Xkmt7S+fMXgvsr1eE6V.0kkTtMJINrH+fBSKKZJ0R31yoBJSJrLaJdmewpWh

R17bV3pBNh..Ntz5Mbwfp+.Hr+.IlVF+b0M8aO9X0aOckPWl+WEwL4QDqaNh

3BrD.DOKsgGA9E9iFPPd2b.ABwlNXUgHaCyy82DdDhDTtcWSgbws3a6B4Rvh

EF+bOPAZQepMrW0FbBW.KKTkJgrvlt.wWHZgdLsEdgZaPzpByuDE90d5JgvE

iua6Dtv1tDlKLFxzFB5Bk4jvuREui6fpTk.ZrtrHVPvA6rptPZrP+ru0a2FD

MB0FHgZaCMcf6eQ7.sFoP0BAYTIKz3zVpOMf0JzYDVqvyq1HOGSKOgWDpKJw

SGp853xn.ypvU4gEe2nHhFRi5Eate7vAgik9Iapfj0wo9EChM.2QXRfjvSlF

oxFK7B4Q8K7ruJvYn.WqiJJx7SxWSQi8XSVXP4JZhBiAr52tAn1nb.hqonYi

GhZ2.c0n+hH.cA9MVJxuoV+gDKyNA4ccnfhki9bbZ64+twOR0.CZ6S+f+qzO

3csNNdp0wABrME8avDPEp.0A3PyadWXRfAMlx37H.D0L.hq6vNDNZQk82wzX

qQpuX0nuDKhIDOPmL95F4njFkqbP0JJgaB20LtHba8zxV7A+jW7KJ7Sptjmp

d6eTtJNJHTnj+y+qzmFU033Z9PTR5Wy+bjwG7esmBi1ek+qx33k9q9rw+NLZ

YXlweLIsv3agE+o1q3umlSmBW+8C1sS4yOilqdQX1ygI9KiCESP65bDarIgH

SnXRMtU40QtptnglJRUjFisg+gQOUD.71OUDDCCP7Ih3bUSD4JMYKSh3CnQS

3kMQtZCnsQuVMDeco8ZGYcCri3yAf8NM3tNBl8mM.mD85S08TStLbsywwjfD

dYS0QOSr50zM9zrXfmPcGb7YW0j6BWe6MnfsVRbYmw1xUuj9STS3+aic94Vs

KznFTyVQigCGbHbfXZKheCgY0.RMhPC.EEG9kvrbpNIzdOTMdsPwOHbKUv3m

RYeQtO0VDcHmLwufGVjE9knl6G2VpeFUOJnJQYFOL6qjF7n5qIMHLKoLh8Mw

Kj1gVKRrttpvy46pC3x5gapdOxvGPGXwlzCcRir+IFZuGMn1FahSW84v.g3N

z9OZNJQIh8ycpNHbseYbwyhAsAPydquYLgdqrcLl+V0BJzpAaxhBRSpDhNcE

UE2zbz7Uw7U5PTYXWQh+tdtYpAGEWFnxbpRVluzOqpmpNHLroxhzz3tU0dew

gqKpqdWTRxAnXQ5tgqLKZyKm3dWlRqb6o9tY0j+bYBu1moFEEOm6+ktncgeb

bsCd2u9W8Sh1RCpUM4T9ftsUxGH5k7UYowwczWdMeomZBnF4qB+ZTPwKrFRz

Xfd4Q6ZLhVz1KGDsILunaYE9ax6VRdw23ftPQkKqchelNX7tXpVz8BntGQ4E

4uPS9q9BaLzDAf8OP.QmZwnkcJ+TQM6F4LekebHEBL.lUuioi8JdcCGbbvY4

bbTRR2JNNR4vSyCvV6HXcXy5I80N3wCMCfTGyQ8vyeoJajygI747NHv3LHv.

uZfAg4gIu03RleRP5VN3bRrQLypAWFMMfL.OFl3Rt0Hi5bn7TuCkq28u+DzR

8VMM3xcs6T6JupdfYp3MArtX2o1mdkBcmbb53NArtGcmPp2poAWtucmr0Fv7

NvcBnduIB9cf2j5sYZfk6ZmIj1vk2A9R1p2WBit+8kvp2loAVtq8kHZCWlPS

Z5R8kbTuujM392WRCyz198vRP3oMb4MyWp7WhpVfzSBIM6Vil+dJHBeIXD5z

XDDZK7j9N7YBoeL5SoQIF.qKKDCdXmIf0EGA1huQv3ONcKOu62PvXj5iACq2

fj204yngIT1fK20AgwXsALuCxnAaogGrhy6.2IMrbmM3x8s6DTa.y6.2IaM7

XUv2+dS1ZXFB32ANS1N5BWzquDSLuLODxv4ucwo2VmvuMOK2126HBMa3SwsG

ASzRBBeUXyEoD7gK5KF.AFNSMvfHfkLc517sBpJQf86nnps21.6SDl.Ey1ov

8AM4okYqZLLZ1zBFcEtfv7hnj1MX0G2usOLj0zczRAQRonZJYFtZRJpdfyxg

Ed5DK7FCV3nKr.IoTT83V0FVz7kKGVPzkTHqYgNsJFCPf0jP3JoP3nQfvYL.

ARW8FxhD.cBEPYMJZBroGovVVo.oQoP5vUZTFrGioostDBYcPrc0ITHqChsN

GJ0dTCkpKo.KKTnSYvZLHAPWRgzihoy.VXoSpPmCniQioGApKoP5XV5L3MVV

WDrV8QjMlEQmRgzyDRixfrgM0YTShz9G5bDDrrPwwWWZV.+.cgeakLxvRl8a

qj0iaeqnQdiEM3vhlyaqn0SBdshl6aqngFVxPusRVOSRoUzfuwhFXXQC71JZ

8LM2VQyp2Un7QwCE5hpC9Wvy7C43y9EEYQKKK3qbo3obcTGFuMwoK8iO3Dz0

2Y06w8B2aFSfQbTy4JmfALFx3VyDXDW0vDX0TW2cBQfQ7TzY.uVquO3ALGjh

3D.tVOAnArZpt5JoxHGa0PkQDNKE1OEfUeNZzCaI7gJl+3mDH0QICdoH58Ax

MH5mKD.ybgvLWHLyEBybgvaEWH7CPSC3zkJDNjaHmYBgYlPXJyDBWk6j1IBg

6RuoYdP32o7fv03KoeVP39zWZlDD9cKIHfmvbfv8IA8LyABybfvLGHLyAByb

fvaiuzLGHLyABybfvLGHLyABybfvLGHLyABybfvLGHLyABybfvLGHLyABybf

vLGHLyABus6G54cQ9Deu2OcOvBS3S4wD9rwLgOQQS2ig0M+nqMMNZeSgC43L

gZLgN0qSiy+6D4rPOMNW3SiyH+zfu.lFbmvzfGIl.LpwzfaQlH7rxzfyYlBz

uyTfGhlFLxzLCxM4XpqIA+FNIXSuIAGKNMXQtYF.Uorr3LGHLvNa7Jo.AHx0

DOvwmViLffiZH.AHDa5PEeLh+nAvrcADhe3XmdLf..oni9csZWS7CWIoGPcb

JntGm7372m1fZYwhc9YTukhvrm4GTVwdMkbH2Q7G7mcy6prysfQrCFKoQnVE

G5mMbmKyGs6Fdi9G9M0K.QFQ2s8YAAGGSBR3k8SM0bwc8CEN5z.Q+JqhBHA3

aas9CHg0h1leJW59UVaEorDHyQtes8J4xjxsKCyNIgkLrqMTQt1vyq61ttlh

l0HfySMO1esviKUc2RPlKCQbIHqd66Qik3RZ0+93wFfMVKLWh+mM7CB1kRic

YD0RoKiy3G5MB.3Tw1sGl3VPZQ8WkVlTbBeh5H5M+u3d5nC.zO8MgGabc9d8

oWxmxinC8ONJuXcYRRX7fPP0kzuRSTTtKVnpDNgDKyNJsakWuVz5cgge96m2

Vu6dapipiUjAOW06uCGoCUuflVS7YIpo9U5wvOSvyYjCA1lhN4XBKHmEyTP0

imuNhNeP+j70oYa2SaUUDY0QjXkAXjj2jhHdNKBi345k7lfXr9nyqsA4e+JY

tKjhHtqZLn2HADac.AKKWuNLaer.ieL2e6t78mhighJbpbkfpwOw1hsqt.Pj

ITLc.2JqD7sje2BNEklImIBPMlH0YHzGk2Av8SsaLoo6tXiCQGtlU0.0wOoA

K9SXqVpjXWmbvlU6nNGYaYmNqruTaHsEai3Iyk2PRfdUQTsgdGiXdt70H4D0

AvN8vkbV.nIt2JUMBzckzGZykI7zUt3VBICVCbUPKApeF5mtW0QAsDTJ6GnJ

ZIGYZIUzOwdhBmE8rTgNAjwhvSE5DVFqbEzPDYzHrJznwzRCD4xiEjAZAMgh

i+YSG+i.3K2YOUdsBtsLBNQAPjTA2Id8OtB35ZZaI7gbTRTUorCTQC4JiKDQ

UpzYC.IrOottVBctVRGIdHUKCzgoIqkOWxVtctlCha.cQl.hv+A83GeJOwoa

RbplBJK2ndp6ZCyJS7bnsBLFsuUMjmDMjJRNxUlbswJpgNmBoBfCHSFrpHIB

jDgkHpHqRhL1BDUXLPjIqRGUzKguUCScyZH6aUCI0LzfppkNWrA.RGiJA8tQ

nITlvRLoAbssjLYlAUQjVnTCR4ppV5bVHPUjDHPlj2UQvVfDQ0QGOaW9hdc.

i8W0FGvT+GvR+GyP+CyN+GxL+rMhEmjxOXg1Z23Ta8ekzxO+MzeeW.ve0pvj

hUowbo5iFVlz46QeC4AAjpO4X44ZYS6mE9kLnZELY2yyQIUZbXy8Z8jvah2w

xMBsAnt152rLwMf4S0cVYTg5P9WewVpzG0jrsXmaGEV3LS2uJ2QYsgHbUBqV

lHGH1l8I5zZwfZwWYhUmGUR+B1kfpqihiaUHw8cWyJFuXSlePT04Qt8GwgpK

Gz1aWkxtyS89If3uvAraC1baNttvp0lruOc7s0bWPOKfGypBUaUU8IZQfCtK

+jM0+VH3HPn+6xR2kl09iBgIxq85KKRaUz1CsbyFDr+tutdGx0KJdfykv5h0

mQmgimEj8IpNyd35WlkEOHy.+Fb73u83+GDTrc4A

-----------end_max5_patcher-----------